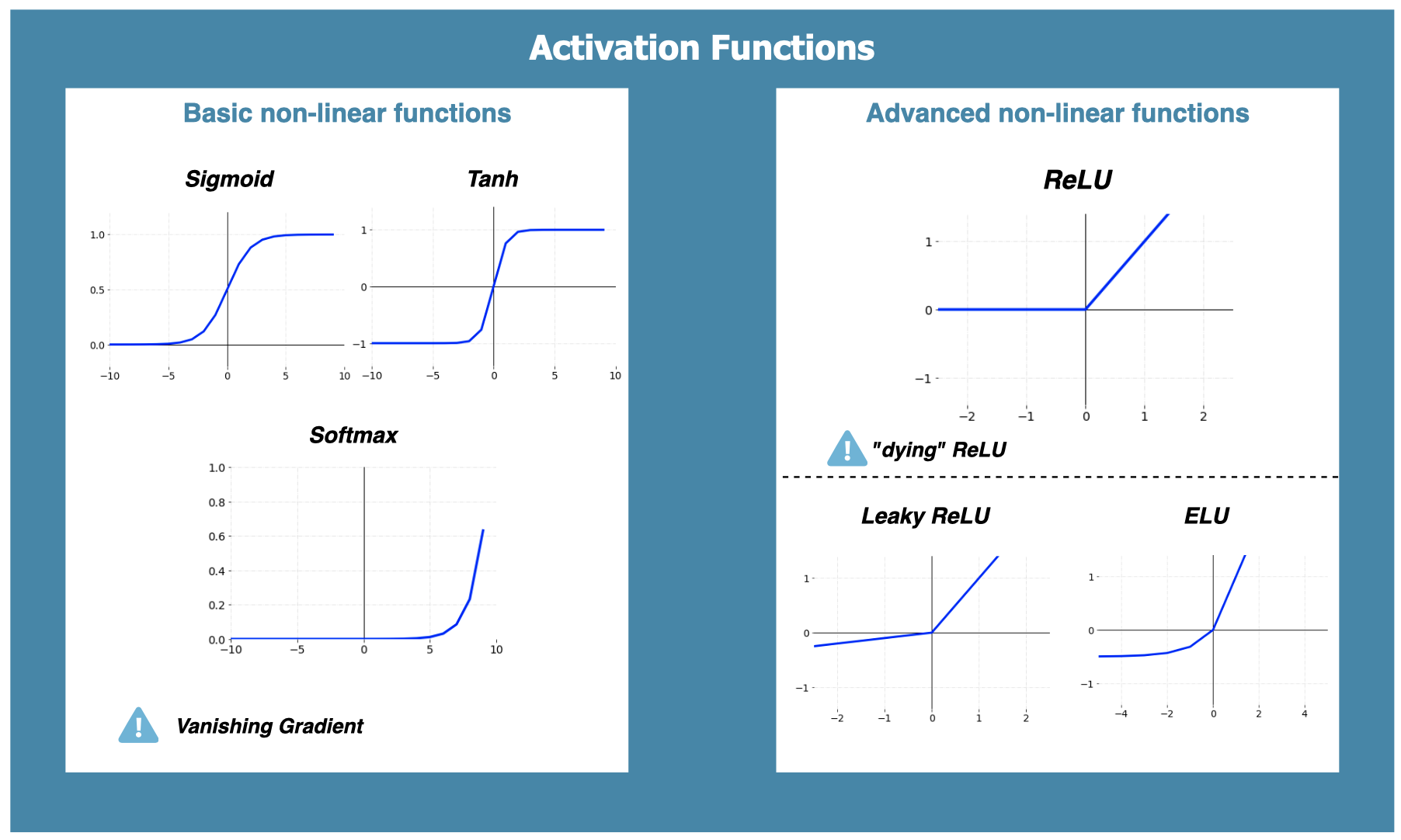

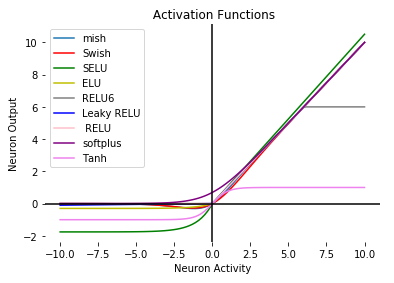

How to Choose the Right Activation Function for Neural Networks | by Rukshan Pramoditha | Towards Data Science

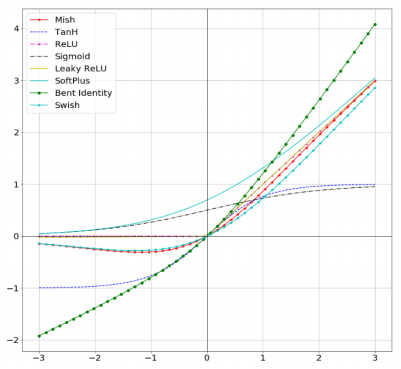

Meet Mish: New Activation function, possible successor to ReLU? - fastai users - Deep Learning Course Forums

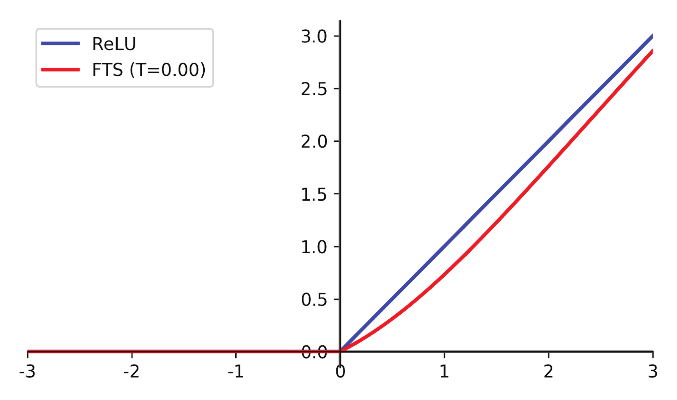

Flatten-T Swish: A Thresholded ReLU-Swish-like Activation Function for Deep Learning | by Joshua Chieng | Medium

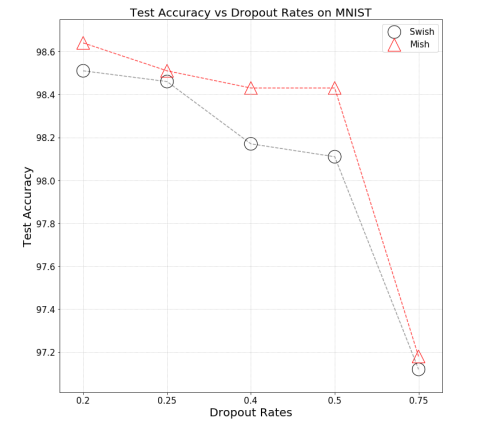

Swish Vs Mish: Latest Activation Functions – Krutika Bapat – Engineering at IIIT-Naya Raipur | 2016-2020

Advantages of ReLU vs Tanh vs Sigmoid activation function in deep neural networks. - Knowledge Transfer

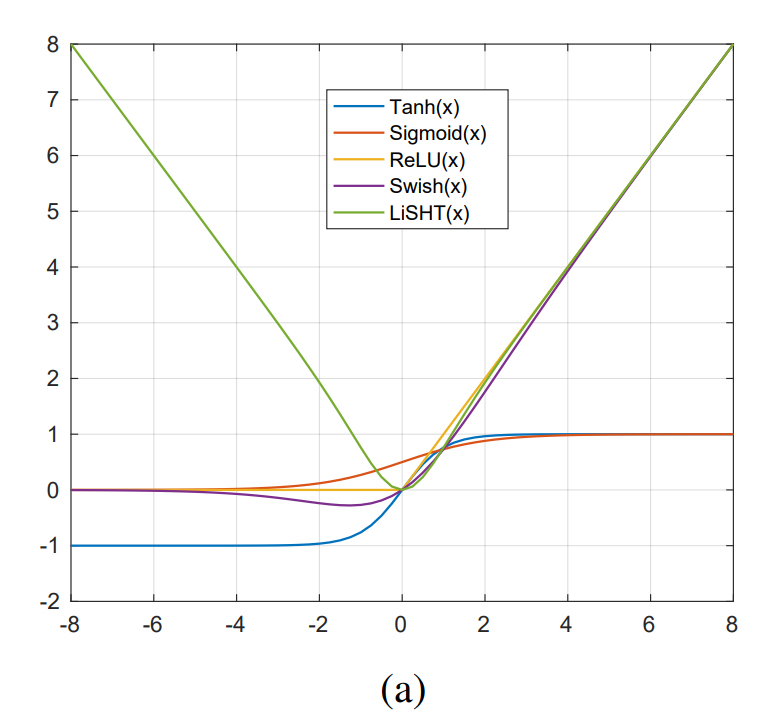

LiSHT (linear scaled Hyperbolic Tangent) - better than ReLU? - testing it out - Part 2 (2019) - Deep Learning Course Forums

What makes ReLU so much better than Linear Activation? As half of them are exactly the same. - Quora

Different Activation Functions for Deep Neural Networks You Should Know | by Renu Khandelwal | Geek Culture | Medium

![Different Activation Functions. a ReLU and Leaky ReLU [37], b Sigmoid... | Download Scientific Diagram](https://www.researchgate.net/publication/339905203/figure/fig3/AS:868603377225728@1584102591508/Different-Activation-Functions-a-ReLU-and-Leaky-ReLU-37-b-Sigmoid-Activation-Function.png)